What Two New Papers Mean for Your Encryption Timeline

For years, the quantum threat to encryption came with a comforting caveat: building a quantum computer powerful enough to break modern encryption would require millions of specialized components called qubits, and nobody was close. Two papers published on March 30 and 31 changed that number dramatically.

A team led by Google Quantum AI showed that breaking the elliptic curve cryptography used in cryptocurrency would require roughly 1,200 logical qubits and fewer than 500,000 physical qubits, about 20 times fewer than previously estimated. Separately, a team from Caltech and Oratomic demonstrated a new error-correction approach that could make Shor’s algorithm practical with as few as 10,000 reconfigurable neutral-atom qubits, a range that current laboratory hardware is beginning to approach.

The practical takeaway: the quantum threat to encryption just moved from “someday” to “years, not decades.” If you’re responsible for your organization’s data security, here’s what these papers found and what to do about it before the first federal acquisition deadline arrives in January 2027.

What the Papers Actually Found

Today’s encryption works because certain math problems are too hard for conventional computers to solve in any reasonable amount of time. Quantum computers use fundamentally different physics that could solve these problems quickly. The question has always been: how powerful does the quantum computer need to be?

The Google paper (published March 30, with co-authors from Stanford, UC Berkeley, and the Ethereum Foundation) focused on breaking the secp256k1 elliptic curve used in Bitcoin and Ethereum. The implications extend well beyond cryptocurrency: elliptic curve cryptography (ECC) is the same family of math that secures TLS (the lock icon in your browser), VPNs, and most government communications. Previous estimates said breaking these curves would require millions of physical qubits. The Google team showed it could be done with roughly 1,200 logical qubits and fewer than 500,000 physical qubits. Moreover, the actual computation on a fast-clock superconducting quantum computer would take just 9 to 12 minutes.

The Caltech/Oratomic paper (published March 31) attacked the problem from a different angle: making each qubit do more work through better error correction. Their approach reduces the hardware requirement to as few as 10,000 qubits. For the two encryption standards that protect most enterprise systems, the paper’s estimates are:

P-256, the elliptic curve standard used in TLS, government systems, and banking: roughly 26,000 qubits, about 10 days of computation.

RSA-2048, a different encryption algorithm still widely used for email security, digital signatures, and legacy enterprise systems: roughly 102,000 qubits, about 97 days.

To put that in context: current laboratory systems have demonstrated arrays of up to 6,100 atoms, per the Caltech paper’s own assessment. The gap between where the hardware is today and where it would need to be has narrowed sharply: roughly a factor of four for P-256 and roughly a factor of 17 for RSA-2048.
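The gap is simple to check from the figures quoted in this article. The sketch below divides each requirement by the 6,100-atom array size; the variable and function names are illustrative and do not come from either paper.

```python
# Figures quoted in this article; names are illustrative, not from the papers.
DEMONSTRATED_ATOMS = 6_100  # largest demonstrated array, per the Caltech paper

REQUIRED_QUBITS = {
    "smallest configuration": 10_000,
    "P-256": 26_000,
    "RSA-2048": 102_000,
}


def hardware_gap(required: int, demonstrated: int = DEMONSTRATED_ATOMS) -> float:
    """Return how many times larger the required array is than today's."""
    return required / demonstrated


for target, qubits in REQUIRED_QUBITS.items():
    print(f"{target}: {hardware_gap(qubits):.1f}x today's largest array")
```

On these inputs the ratios come out to about 1.6 for the smallest configuration, 4.3 for P-256, and 16.7 for RSA-2048.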

Why These Numbers Dropped So Fast

When QuSecure first started working with the Air Force six years ago, the standard estimate for breaking RSA-2048 was around 4,000 error-corrected qubits and 20 million physical qubits. In the years since, quantum hardware has scaled faster than many expected, and advances in error correction have progressively brought the required scale down. The papers published this week represent the most significant breakthroughs to date: the estimated physical qubit requirement for breaking RSA-2048 has dropped from 20 million to roughly 102,000.

The short version of how: a breakthrough in error correction.

Quantum computers are fragile. Their qubits make errors constantly. The standard way to handle that has been to dedicate roughly 1,000 physical qubits just to keep a single “logical” qubit (the one actually doing useful computation) running reliably. That 1,000-to-1 overhead is why previous estimates required millions of qubits.

The Caltech team demonstrated a newer error-correction method called qLDPC (quantum Low-Density Parity Check) codes that cuts that overhead by a factor of 161. Some configurations bring the ratio down to 5-to-1. That single advance is what collapses the hardware requirement from “science fiction” to “a few years of engineering.”
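To see why the overhead ratio dominates the hardware bill, here is a back-of-the-envelope sketch. It multiplies a hypothetical workload of 1,000 logical qubits (an assumption chosen for round numbers, not a figure from either paper) by the three ratios mentioned above. It is not a reproduction of either paper's resource estimate, which accounts for more than this single ratio.

```python
import math


def physical_qubits(logical_qubits: int, physical_per_logical: float) -> int:
    """Physical qubits needed to encode the given number of logical qubits."""
    return math.ceil(logical_qubits * physical_per_logical)


LOGICAL_QUBITS = 1_000                    # hypothetical workload, for illustration only
STANDARD_OVERHEAD = 1_000                 # the roughly 1,000-to-1 ratio cited above
QLDPC_OVERHEAD = STANDARD_OVERHEAD / 161  # overhead cut by a factor of 161
BEST_CASE_OVERHEAD = 5                    # the 5-to-1 ratio cited for some configurations

for label, ratio in [
    ("standard error correction", STANDARD_OVERHEAD),
    ("qLDPC, factor-of-161 reduction", QLDPC_OVERHEAD),
    ("qLDPC, 5-to-1 configurations", BEST_CASE_OVERHEAD),
]:
    print(f"{label}: {physical_qubits(LOGICAL_QUBITS, ratio):,} physical qubits")
```

The same hypothetical workload falls from a million physical qubits to a few thousand once the ratio changes, which is the effect described above.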

The engineering challenges ahead (building larger qubit arrays, maintaining accuracy at scale) are significant. However, they’re engineering problems with visible paths forward, not physics breakthroughs that may or may not arrive.

The Business Case Just Changed

Three data points that belong in your next board briefing:

The cost of discovery is manageable. The SEC’s Post-Quantum Financial Infrastructure Framework (submitted September 2025) documents a case where a global investment bank ran a full audit of its encryption and found 47,000 cryptographic assets, 1,200 critical systems, and 340 high-risk dependencies. The full migration budget: $12 million. Expensive, but plannable. The SEC framework contrasts this with the cost of emergency migration after a quantum event. It describes that cost as orders of magnitude higher.

The macroeconomic exposure is real. The Citi Institute’s January 2026 report, co-authored by QuSecure’s Rebecca Krauthamer and Garrison Buss, modeled a single-day quantum attack on one top-five U.S. bank’s Fedwire access. The estimated impact: $2 to $3.3 trillion in GDP at risk. That’s 10 to 17 percent of U.S. GDP from one attack on one institution.

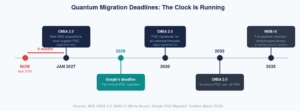

The deadlines are already set. The U.S. government has published a detailed migration timeline for all national security systems. CNSA 2.0, the NSA’s cryptographic modernization standard, prohibits new acquisitions into national security systems that do not support post-quantum cryptography as of January 1, 2027. That’s nine months away. By the end of 2030, all software and firmware must use quantum-resistant signatures, and equipment that can’t be upgraded must be retired. By 2033, quantum-resistant encryption must be the exclusive standard across all national security systems. And NSM-10, the White House national security memorandum that gives these timelines their enforcement mandate, requires full quantum-resistant infrastructure by 2035. Google has publicly committed to migrating its own infrastructure by 2029. When the company that operates one of the world’s largest quantum computing programs sets its own migration deadline, that signal is worth reading.

It’s Already Been Done

The most common objection from security teams is that quantum-safe migration is too complex, too risky, or too early. A four-month pilot between Banco Sabadell and QuSecure disproved all three.

Banco Sabadell deployed QuSecure’s QuProtect platform to test every NIST-approved post-quantum algorithm, including ML-KEM (now one of the NIST PQC standards), across its customer-facing web portal infrastructure. The results: zero downtime during migration windows, minimal performance impact, full compatibility with legacy infrastructure without rip and replace, and the ability to switch between quantum-safe algorithms through QuProtect’s graphical interface. The pilot is cited in the SEC’s Post-Quantum Financial Infrastructure Framework (Section 7) as a “benchmark for industry-wide adoption.”

The key insight from that pilot: you don’t need to overhaul your infrastructure before starting. QuProtect deploys at the network layer, wrapping existing systems in quantum-safe encryption without touching application code. Importantly, you don’t need to finish your encryption audit before starting a pilot. These can run in parallel.

What to Do This Quarter

Run a cryptographic discovery. Map encryption dependencies in your environment: certificates, keys, protocols, libraries, embedded systems. The SEC framework’s 47,000-asset discovery gives you a realistic sense of scale.
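As a rough illustration of what the output of a discovery pass might feed into, the sketch below triages inventory rows by algorithm family. The asset names and the `CryptoAsset` record are hypothetical; a real scan would populate them. The groupings reflect that Shor's algorithm targets RSA, Diffie-Hellman, and elliptic curve schemes, while ML-KEM, ML-DSA, and SLH-DSA are the NIST standards finalized in 2024.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable

# Public-key families that Shor's algorithm targets.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DH", "DSA"}
# NIST post-quantum standards finalized in 2024.
POST_QUANTUM = {"ML-KEM", "ML-DSA", "SLH-DSA"}


@dataclass(frozen=True)
class CryptoAsset:
    name: str       # hypothetical asset label
    algorithm: str  # algorithm family as recorded by the scan


def classify(asset: CryptoAsset) -> str:
    """Sort one asset into a migration bucket by algorithm family."""
    family = asset.algorithm.upper()
    if family in QUANTUM_VULNERABLE:
        return "migrate"
    if family in POST_QUANTUM:
        return "quantum-safe"
    return "review"  # symmetric ciphers, hashes, or unrecognized entries


def summarize(assets: Iterable[CryptoAsset]) -> Counter:
    """Count assets per bucket."""
    return Counter(classify(asset) for asset in assets)


# Hypothetical inventory rows, for illustration only.
inventory = [
    CryptoAsset("web-portal TLS certificate", "ECDSA"),
    CryptoAsset("VPN gateway key exchange", "ECDH"),
    CryptoAsset("email signing key", "RSA"),
    CryptoAsset("pilot segment key exchange", "ML-KEM"),
    CryptoAsset("database encryption at rest", "AES"),
]

print(summarize(inventory))
```

Even a coarse triage like this gives the board a count of what has to move, which is the number the migration budget depends on.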

Start a focused pilot in parallel. The most important misconception to drop: that you must finish your inventory and planning before piloting. Pick one application, one network segment, or one critical system. Use the pilot to build internal expertise, measure real performance impact, and create the evidence base for broader rollout. Piloting while discovery is still underway is how the highest-performing organizations are approaching this, and it avoids millions in misdirected spending based on theory rather than practice.

Pick a solution that gives you algorithm flexibility. The first set of post-quantum encryption standards was finalized in 2024, but the landscape is still evolving (additional standards are expected soon). You need the ability to swap algorithms without re-architecting your infrastructure. Network-layer solutions that sit between your applications and the wire deliver this. They work without touching application code or requiring you to upgrade legacy infrastructure.
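Algorithm flexibility can be pictured as a preference list that lives in configuration rather than in application code. The sketch below is a generic illustration of that idea, not a description of how any particular product works. The `negotiate` function and the policy lists are hypothetical; the group names are ones used for TLS key exchange.

```python
from typing import Optional, Sequence


def negotiate(local_preference: Sequence[str], peer_supported: Sequence[str]) -> Optional[str]:
    """Return the first locally preferred algorithm the peer also supports."""
    supported = set(peer_supported)
    for algorithm in local_preference:
        if algorithm in supported:
            return algorithm
    return None  # no overlap: the connection cannot be established


# Hypothetical policies. Changing the list is a configuration change only.
policy_hybrid_first = ["X25519MLKEM768", "X25519", "secp256r1"]
policy_pq_required = ["X25519MLKEM768"]

# Hypothetical peers.
upgraded_peer = ["X25519MLKEM768", "X25519"]
legacy_peer = ["secp256r1", "X25519"]

print(negotiate(policy_hybrid_first, upgraded_peer))
print(negotiate(policy_hybrid_first, legacy_peer))
print(negotiate(policy_pq_required, legacy_peer))
```

Under the first policy, upgraded peers get the hybrid post-quantum group while legacy peers still connect on a classical one. The stricter policy refuses legacy peers outright, and no application changes in either case.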

Brief your board. The conversation about quantum risk has shifted from theoretical to operational. These two papers, combined with federal timelines that begin in nine months, give leadership teams enough concrete information to make resourcing decisions. The organizations that start now will have the luxury of migrating on their own schedule. The ones that wait will eventually be migrating on someone else’s.

At QuSecure, we make it our job to make you successful. Reach out to learn why we’ve been named the Global Product Leader in post-quantum cybersecurity.

QuSecure’s QuProtect platform deploys quantum-safe encryption at the network layer, enabling cryptographic discovery, remediation, and ongoing algorithm agility without infrastructure overhaul. Learn more at qusecure.com.